LeapFive News

A New Breakthrough in RISC-V Computing! Yuefang AMC Brings DeepSeek’s Large Language Model Right into Your Pocket.

Release time: 2025-03-15

From “cloud-based queuing” to “having a personal co-pilot”—edge AI can do it all!

Is the DeepSeek Q&A response timing out again?

“No data center, no server—can a single USB drive really power the DeepSeek large language model?” Yes, we’ve made it happen!

Yuefang Technology, a leading domestic RISC-V SoC chip company, has successfully deployed DeepSeek large models locally. In the form factor of a USB drive, it is redefining how AI computing is used.

AMC (AI Mini Computer) is powered by Yuefang Technology's self-developed RISC-V chip. Built for direct USB connection to your host device, it is compatible with multiple operating systems and supports locally deployed AI inference applications, such as vision applications based on traditional neural networks. AMC now adds large-model applications as well, running independently of the cloud and unaffected by network fluctuations. It is plug-and-play and ready for inference at any time. Turn an ordinary PC into an AI workstation in seconds, with AI always on standby and quick to respond as your personal AI co-pilot!

*AMC product photo | Your personal smart assistant in your pocket

A small body holds great opportunities—moving from “usable” to “easy to use.”

🔵 Plug and play: No server required. Just plug in via USB and complete deployment in 10 seconds.

🔵 3 W ultra-low power consumption: Both computational performance and the model itself still have room for optimization.

🔵 Local private knowledge base: Protect data privacy, carry your data with you, and keep it secure and under your control.

🔵 Local web access (beta): Instead of queuing in the cloud and waiting for responses, your AI assistant is always available.

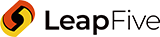

*Models that have already been successfully deployed and are in operation

User interface: The design is simple and intuitive, so users can get started quickly without complicated configuration. Less is more.

OTA + Scenario-Based Models: Pioneering a New Paradigm for Edge AI Computing!

As AI computing expands into multi-scenario applications, developers hope to train and deploy models with greater flexibility, while users look forward to AI that precisely meets their personalized needs. However, traditional AI computing relies on the cloud, and high computational costs, network latency, and data privacy concerns make it difficult to deploy AI in diverse edge applications.

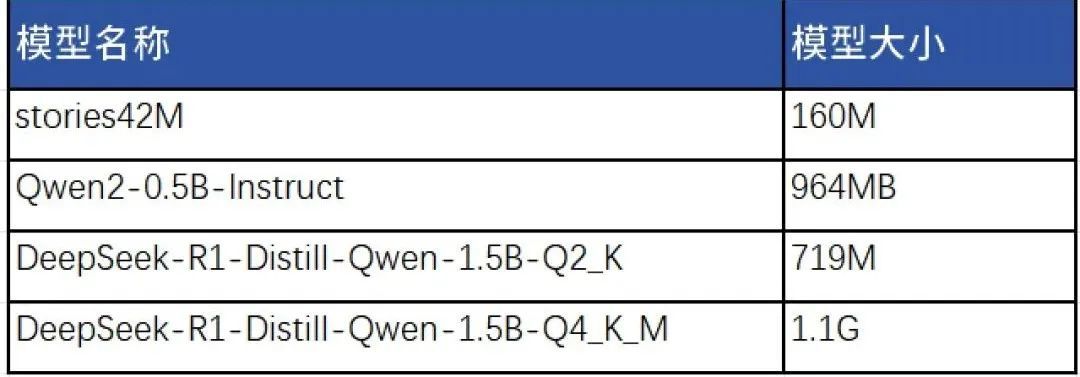

Deploying DeepSeek to AMC has also prompted deeper reflection and led to the following observations: DeepSeek's miniaturization and low cost, combined with OTA (over-the-air) upgrade capabilities on edge devices, have the potential to become a new approach to deploying edge AI computing applications. This would move AI computing from the cloud to the edge and let AI adapt more precisely and flexibly to local applications.

🔹 “Large and all-encompassing” models are not suitable for edge AI applications; instead, we should develop models that are more tailored, more refined, and more efficient according to the specific needs of each scenario.

The core of edge AI computing lies in precisely addressing specific scenario-based challenges. But where do the right models come from? We believe that by establishing an open, scenario-specific model marketplace, we can attract more developers to join in and train more sophisticated AI models tailored to diverse needs—such as education, industry, office environments, and personal assistants—thus better aligning with local, scenario-specific application requirements.

🔹 OTA has the potential to become the standard approach for dynamic adaptation in AI scenarios.

Traditional OTA is used solely for remote software upgrades. However, if we consider edge AI applications that are scenario-based, OTA will need to incorporate an additional capability for on-demand retrieval and deployment of AI models. This will enable edge devices—despite their constrained hardware resources—to deliver a superior AI interaction experience through dynamic model selection.
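The idea of dynamic model selection on a resource-constrained device can be illustrated with a minimal sketch. Everything here is hypothetical: the catalog format, the `ModelEntry` fields, the `select_model` function, and the quality scores are assumptions made for illustration and do not describe AMC's actual OTA mechanism. The sketch picks the highest-rated model for a scenario that still fits the device's free storage and memory.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class ModelEntry:
    """One entry in a hypothetical scenario-model catalog (illustrative only)."""
    name: str
    scenario: str      # e.g. "office", "education", "industry"
    storage_mb: int    # space the model needs on the device
    ram_mb: int        # memory the model needs at inference time
    quality: float     # assumed quality score; higher is better


def select_model(
    catalog: Iterable[ModelEntry],
    scenario: str,
    free_storage_mb: int,
    free_ram_mb: int,
) -> Optional[ModelEntry]:
    """Return the best model for the scenario that fits the device, or None."""
    fits = [
        m for m in catalog
        if m.scenario == scenario
        and m.storage_mb <= free_storage_mb
        and m.ram_mb <= free_ram_mb
    ]
    # Among the models that fit, prefer the one with the highest quality score.
    return max(fits, key=lambda m: m.quality, default=None)


if __name__ == "__main__":
    # Hypothetical catalog entries; names and sizes are made up.
    catalog = [
        ModelEntry("office-small", "office", 900, 1000, 0.6),
        ModelEntry("office-large", "office", 4000, 6000, 0.9),
        ModelEntry("edu-small", "education", 500, 800, 0.7),
    ]
    choice = select_model(catalog, "office", free_storage_mb=2000, free_ram_mb=1500)
    print(choice.name if choice else "no model fits")
```

In a real OTA flow, the selected entry would then be downloaded and verified before deployment; those steps are omitted here because the article does not specify them.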

🔹 There will also be opportunities for innovation in business models, and the edge AI ecosystem will create greater value.

This new paradigm—“OTA + Scenario-Based Models”—will make it feasible to build an open, subscription-based, and iteratively enhanced model marketplace for edge AI computing. Users can try out AI models at low cost and then subscribe on demand, while developers can offer their AI models through the marketplace and continuously refine the model experience based on user feedback, thus creating a positive feedback loop for edge AI computing and accelerating the practical deployment of AI at the edge.

We believe that future AI computing shouldn't be confined solely to the cloud—rather, it should truly belong to individuals!

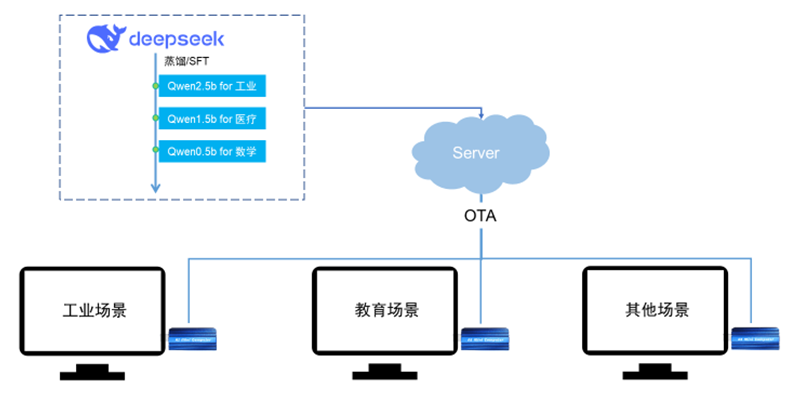

For individual users, dedicated AI can become a long-term partner for personal growth.

Everyone’s knowledge, experience, and decision-making style are unique, so AI applications should also be capable of delivering personalized experiences. AMC’s portability lets AI adapt to users’ needs across various scenarios, providing instant responses and intelligent assistance. At the same time, through long-term data storage and continuous learning, AMC steadily accumulates and refines its capabilities, gradually building a digital twin that is uniquely tailored to each individual. This lets AI computing truly understand its user, keep growing, and become a long-term intellectual asset for the individual.

🔹 Runs offline and can be called anytime across multiple scenarios. Whether for office work, study, or industrial applications, AI is plug-and-play and does not rely on the cloud.

🔹 Storage and playback: Using AMC’s built-in 32 GB of storage, data generated during use can be stored locally or actively synchronized to a personal host, creating a personalized AI memory.

🔹 Let AI models grow in a personalized way, becoming the “smartest assistant who understands you best” and responding instantly anytime, anywhere.

Conceptual diagram

From “large and comprehensive” to “small and refined,” we’ve seen AMC’s potential in the AI computing field, which holds promise for making AI computing more precise and more efficient. Scenario-based models and OTA updates will enable developers to build AI applications faster and allow users greater freedom in their AI usage, driving AI computing toward a shift from general-purpose to customized solutions, from fixed to flexible architectures, and from single-use to multi-faceted applications—truly empowering countless industries and sectors.

Yuefang Technology, leveraging the RISC-V computing architecture, continuously explores innovative integrations of chips, edge AI computing, and intelligent connectivity, accelerating the advancement of domestically developed, independent AI computing. We believe that in the future, AI computing will no longer be an expensive resource but rather a universally accessible capability. Personalized intelligence is the core driving force propelling the world toward a smarter future!

AMC is now open for trials and welcomes all kinds of business discussions.

📞 Consult now to get a personalized AI computing solution!

Phone: +86-17727560859 (direct line to professional team)

*Some features mentioned in this article are still under development and optimization at various stages: features such as web page loading speed and lightweight AI model adaptation are currently being refined and will continue to be upgraded and improved. Meanwhile, knowledge base storage and the OTA subscription model remain at the concept-validation stage; in the future, we will gradually explore their practical implementation based on technological advancements. Currently, AMC is adapted to lightweight AI models, while full-scale large-model inference capabilities are still under exploration. We welcome developers and users alike to join us in testing and providing feedback, helping to drive the continuous evolution of the AI computing ecosystem.
